Current publications and results of the consortium and the associated members of the Research Hub Neuroethics in the field of neuroethics are listed here.

Chronic stress is a global issue with detrimental effects on health and productivity, often leading individuals to adopt health-related coping strategies. This study uses an adapted Job Demands–Resources model to examine how various job demands and resources impact perceived stress and, consequently, the use of legal, prescription, and illegal drugs for enhancement purposes. Utilizing multiple waves from a nationwide sample of the working population in Germany (N = 7,705), structural equation models reveal that certain job demands increase perceived stress, while several resources mitigate it. Stress mediates the relationship between these factors and the use of legal and prescription drugs for cognitive enhancement. Illegal drug use was only directly impacted by selected job demands and resources. Thereby, this study expands the Job Demands–Resources model's applicability to include health-related behaviors like drug use. Practically, it calls for multidimensional strategies to prevent potentially health-endangering drug use, including structural improvements and individual interventions.

What is it to grieve? What is the nature of grief? An intuitive and straightforward answer to these questions, one that will be familiar to all of us, is that grief is an emotional reaction to the death of a close loved one. Grief is intimately connected to bereavement. However, grief can arise in situations beyond the death of a significant other and is revealed to be a much more complex and heterogeneous experience. People can grieve over all sorts of losses. What makes our response to these losses one of grief? We can understand all these losses as involving a loss of life possibilities, which impacts one's practical identity and who one takes oneself to be. Importantly, a close examination of the phenomenology of chronic pain helps illuminate the ways in which it also involves the kind of losses that we can grieve over. The losses involved in experiences of chronic pain impact one's practical identity in ways that can lead to grief. This chronic pain grief remains largely unrecognized, however. We outline four epistemic barriers to recognizing the grief involved in experiences of chronic pain.

Unresponsive Wakefulness Syndrome (UWS), a condition characterized by wakefulness without awareness, presents significant medical and moral uncertainties, particularly in end-of-life decision-making. Digital Brain Twins (DBTs), virtual replicas of patients’ brains driven by advanced artificial intelligence, offer the potential to alleviate medical uncertainties by providing precise diagnoses, prognoses, and experimental platforms for treatment testing. This paper provides a theoretical contribution by examining the potential ethical impact of these technologies in the context of UWS. We argue that, while DBTs promise greater diagnostic accuracy, personalized predictions of recovery, and non-invasive tools for exploring therapeutic interventions, they do not necessarily resolve the moral uncertainties faced by proxy decision-makers. Decisions about withdrawing or continuing life-sustaining treatment are fundamentally moral and value-laden, often extending beyond empirical evidence provided by DBTs. Factors such as cognitive biases, emotional distress, and subjective interpretations of best interest further complicate these decisions. While DBTs represent a breakthrough in precision medicine, their role in navigating the ethical complexities of UWS is limited, emphasizing the need for integrated approaches that combine technological innovation with ethical and psychological support for proxies.

In recent years, many sociological, psychological, and epidemiological studies have focused on the syndrome of social inequality, status concerns, and the emergence of social problems in affluent capitalist societies. The basic assumption is that increasing competitive pressures stress people and cause them to seek any small advantage in the status competition. Against this background, this study examines how status seeking, status anxiety, and a competitive working climate relate to the disposition to engage in self-optimizing behavior. Using representative survey data from the working population in Germany (N = 3551), we show that an individual's disposition to seek high status is positively associated with self-optimization dispositions in the form of a greater willingness to use prescription drugs without medical necessity with the aim of enhancing cognitive performance. This effect is moderated by status anxiety and a competitive working climate; that is, anxious status seekers and status seekers in competitive jobs are particularly willing to self-optimize. These findings underscore the importance of positive status experiences (feeling valued) in the workplace, especially for highly ambitious individuals.

Neuromodulation refers to the targeted, continuous, and controllable modulation of neural processes with the aim of producing therapeutic effects. A prime example of neuromodulation is deep brain stimulation (DBS). DBS is most commonly used for movement disorders, but is increasingly being applied to other neurological and psychiatric conditions as well. This article introduces the fundamentals of neuromodulation and the criteria for its ethical evaluation. It then explains current clinical and ethical considerations of neuromodulation using DBS as an example. The challenges of the procedure are illustrated using examples of DBS for depression and its effects on interpersonal relationships. The article concludes with recommendations for research and the clinical advancement of DBS.

With advances in neurotechnology and its use for medical treatment and beyond, it is important to understand the public’s awareness of such technologies and potential disparities in self-reported knowledge, because knowledge is known to influence the acceptance and use of new technologies. This study utilizes a large sample (N = 10,339) to depict the existence and extent of self-reported knowledge of these neurotechnologies and to examine knowledge disparities between respondents. Results show that most respondents self-reported at least some knowledge of ultrasound and electroencephalography (EEG), but limited knowledge of BCIs. Prior use, being a healthcare professional, and health literacy increased the odds of self-reporting some knowledge. Gender and age disparities also exist. These findings may help identify uninformed groups in society and enhance information campaigns.

Culturally diverse societies often struggle with providing appropriate dementia care. Cultural sensitivity is considered an important prerequisite for meeting the needs of persons with dementia. This article discusses culture-specific aspects of dementia care by referring to the Turkish community in Germany as an example. Factors are discussed that specifically impair the quality of dementia care for migrants. The article defends the claim that good dementia care for migrants can be provided through a person-centered approach, which is in turn based on a culturally sensitive approach. We show how culture shapes health phenomena but also argue that a focus on culture may stereotype individuals as belonging to a particular culture, grouping people together irrespective of their heterogeneity. Person-centered care is ideal for recognizing diverse needs and values. It is often seen as being at odds with culturally sensitive care, but this paper suggests a way of reconciling them. We argue that culture does indeed provide a framework to create the necessary foundation for person-centered care. Finally, some criticisms and plausible replies are discussed and practical implications arising from the analysis are presented.

Human augmentation is defined as the use of science or technology to modify human performance temporarily, or permanently, to exceed normal physical and/or psychological capabilities of a human body. Our previous work proposed nine ethical principles of human augmentation in the defence context: necessity, human dignity, informed consent, transparency and accountability, equity, privacy, ongoing review, international law, and broader social impact. Here we describe the results of a mixed-methods study using focus groups (NGroups = 9) and a web-based survey among serving military personnel (NParticipants = 43) examining how important and appropriate the participants thought the principles were when considering the development, adoption, and implementation of human augmentation technology. This study explores the participants’ stated reasons for their ratings, and the association with indicators of experience and socio-demographic groups. This work provides insights into how the principles can relate to each other at various stages of the technology life cycle, and how they could function together to support a thorough ethical analysis during the implementation of such technology. Following our analysis, several refinements to the principles are subsequently suggested.

While trust is foundational to the doctor-patient relationship, the introduction of AI into healthcare settings poses the risk of eroding this trust, and such erosion cannot be countered simply by appealing to the notion of “trustworthy AI.” We argue that trust presupposes specific epistemic attitudes that cannot be meaningfully applied to AI systems. Accordingly, our focus is not on specifying which capabilities AI must exhibit in order to appear trustworthy, but on examining from an epistemological perspective how the use of AI reshapes the dynamics of trust within the doctor-patient relationship. To this end, we first sketch conceptions of trust and demonstrate how trust differs from reliance. We then combine the model of Computational Reliabilism with an epistemic framework to develop a matrix for the ethical analysis of our use cases. Finally, we apply this framework to three scenarios of melanoma detection, risk prediction, and psychotherapy chatbots, which we construct by mapping epistemic stances across different modes of human-machine interaction, ranging from collaborative support with varying degrees of autonomy to the replacement of human-human interaction. We argue that the application of AI in the doctor-patient relationship exposes what we call a “reliability gap” — a conceptual space where the opaque nature of advanced AI systems prevents both doctors and patients from independently verifying their reliability. This creates a dynamic where reliability in the AI’s performance is increasingly mediated by the doctor as a proxy. Our use cases demonstrate that the more autonomous and opaque AI systems are, the more trust in the doctor becomes essential for bridging reliability gaps, while threatening to overburden the doctor’s central role.

There has been speculation about a growing demand for substances used without medical need for cognitive enhancement (CE). Thus, the prevalence rates and the identification of sociodemographic groups at risk of this behavior need further description and constant monitoring. We surveyed a nationwide, web-based sample (N = 22,101) of the general adult population in Germany that is representative regarding sex, age, education, and federal state. Results show a high past-twelve-month prevalence of consuming caffeinated drinks for CE (62.4% of respondents), followed by food supplements and home remedies (31.4%), and caffeine tablets (2.5%). The twelve-month prevalence of CE with prescription drugs was 3.7% (lifetime: 5.5%), of whom 29.1% reported using them 40 or more times; 40.5% of all respondents indicated some future intake willingness. Cannabis was the most frequently reported illegal drug for CE (past twelve months: 4.0%; lifetime: 10.7%), followed by the category amphetamine and methamphetamine (past twelve months: 1.0%; lifetime: 2.4%), and cocaine (past twelve months: 0.9%; lifetime: 2.4%). We also show variation in the prevalence across multiple ascribed and achieved sociodemographic characteristics. These results can inform public policy and prevention strategies regarding the continued monitoring of the prevalence of CE and the identification of groups at risk of drug misuse.

The new European Medical Device Regulation (MDR), i.e. Regulation (EU) 2017/745, came into force in May 2021 and is being gradually implemented, with full compliance required for all medical devices on the EU market by May 2028. One key objective of the MDR is to regulate devices that, while technically being similar or even identical to medical devices, do not (yet) have an explicit clinical purpose. Under the previous regulatory framework, the Medical Device Directive (MDD), Non-Invasive Brain Stimulation (NIBS) devices with a medical purpose were classified as medical devices and subjected to regulatory control. However, when the same NIBS devices were used for non-medical purposes, such as cognitive enhancement in healthy individuals or experimental research on healthy participants, they were not regulated, despite posing the same risks. The new MDR aims to address this gap by extending regulatory control to such "non-medical devices", enhancing user safety through "more stringent" conformity assessment and post-market surveillance and ensuring that unsafe or non-compliant devices do not reach the public market.

Annex XVI of the MDR lists devices that fall under the scope of the law even though they lack an intended medical purpose. The list includes: “Equipment intended for brain stimulation that apply electrical currents or magnetic or electromagnetic fields that penetrate the cranium to modify neural activity in the brain.” This category encompasses all forms of transcranial electrical stimulation (tES), including direct current, alternating current and random noise stimulation (tDCS, tACS, and tRNS) and transcranial magnetic stimulation (TMS). Notably, neither the list in Annex XVI nor the reclassification includes more recently introduced technologies that employ other physical principles to non-invasively stimulate the human brain, such as transcranial ultrasonic stimulation (TUS) or photobiomodulation (PBM). While Annex XVI had listed the transcranial electrical and magnetic stimulation technologies in 2017, the risk classification for these devices, when being used for non-medical purposes, remained unspecified for several years. This left industry and researchers in limbo as they awaited final decisions from the EU.

Under the MDR, medical devices are classified into four classes following a risk-based classification scheme, which links the class of the device to the potential risk posed to the patient's health due to performance faults. All medical devices are classified as class I, IIa, IIb, or III, with class III being the highest risk class. Class III devices are by definition those where a malfunction or failure of the device could result in death or serious injury. These are primarily devices intended for invasive surgical procedures, such as deep brain stimulators or pacemakers. Guidance documents, published in 2021, clarified that NIBS technologies used for clinical or medical purposes (and thus covered by the core of the MDR rather than Annex XVI) were classified as Class IIa (i.e., TMS and low intensity tES) or Class IIb (see MDCG 2021-24). It was therefore widely assumed that these types of NIBS devices would receive the same or a lower classification for non-medical use, as patients are generally considered to be at higher risk due to their overall health status. This assumption proved incorrect; in fact, the reverse occurred.

While we agree that the risk-benefit ratio of NIBS devices may be higher and harder to control when used unsupervised by non-experts, the reclassification was based on the premise of “increased risk”. This has led to widespread confusion in the brain stimulation field, slowing research, leaving clinicians uncertain, and frustrating patients [1,2].

The aim of this paper is i) to review the scientific evidence on the basis of which the reclassification was implemented and to provide the relevant scientific evidence that was omitted; ii) to summarize our efforts to challenge the reclassification; iii) to present clear, evidence-based findings to address existing ambiguities in the regulatory NIBS field, providing the scientific community with reliable information to guide further research and application. This is relevant as any risk assessment and any claims of possible side and adverse effects should be based on scientific empirical data rather than speculation. The “saga” until now was mainly about procedures and regulatory processes, and thus about formalities. What is missing is a scientific review of the concrete articles and evidence put forward by the reclassification committee to justify their revised risk assessment of NIBS. The current article is written by leading experts, scientists who evaluated this evidence and provide a purely scientific assessment of the data available today.

While risk perceptions affect various health behaviors, there is insufficient knowledge about how they are formed and change over time surrounding illicit substance use. This study investigates the role of prior use, social influences, and media information on changes in the risk perceptions of expected susceptibility and severity of side effects in the context of the nonmedical use of prescription drugs for cognitive enhancement. It also examines differential updating by testing for the potential conditioning effects of prior use and self-control. We use a three-wave panel design (N = 8,377) with a nationwide random sample of adults in Germany. Fixed-effect regression models show that prior use and positive media information lower both risk perceptions, while negative information from others and the media produces increases. Rare users compared to non- and frequent users were more sensitive to new information obtained through others, thus showing stronger changes in risk perceptions. Moreover, self-control partially moderated the magnitude of changes in both risk perceptions, for example, regarding side effects reported in the media, which affected individuals with low self-control more strongly. In sum, the results indicate that personal and vicarious information affect the updating of risk perceptions, while partial evidence exists for differential updating.

Background

The use of artificial intelligence (AI) in psychiatry holds promise for diagnosis, therapy, and the categorization of mental disorders. At the same time, it raises significant theoretical and ethical concerns. The debate appears polarized, with proponents and critics seemingly irreconcilably opposed. On the one hand, AI is heralded as a transformative force poised to revolutionize psychiatric research and practice. On the other hand, it is depicted as a harbinger of dehumanization. To better understand this dichotomy, it is essential to identify and critically examine the underlying arguments. To what extent does the use of AI challenge the theoretical assumptions of psychiatric diagnostics? What implications does it have for patient care, and how does it influence the professional self-concept of psychiatrists?

Methods

To explore these questions, we conducted 15 semi-structured interviews with experts from psychiatry, computer science, and philosophy. The findings were analyzed using a structuring qualitative content analysis.

Results

The analysis focuses on the significance of AI for psychiatric diagnosis and care, as well as on its implications for the identity of psychiatry. We identified different lines of argument suggesting that expert views on AI in psychiatry hinge on the types of data considered relevant and on whether core human capacities in diagnosis and treatment are viewed as replicable by AI.

Conclusions

The results provide a mapping of diverse perspectives, offering a basis for more detailed analysis of theoretical and ethical issues of AI in psychiatry, as well as for the adaptation of psychiatric education.

Purpose

This study aims to demonstrate the importance of recognizing stress in the workplace. Accurate novel objective methods that use electroencephalogram (EEG) to measure brainwaves can promote employee well-being. However, using these devices can be both beneficial and potentially harmful, as manipulative practices can undermine autonomy.

Design/methodology/approach

Emphasis is placed on business ethics as it relates to the ethics of action in terms of positive and negative responsibility, autonomous decision-making and self-determined work through a literature review. The concept of relational autonomy provides an orientation toward heteronomous employment relationships.

Findings

First, using digital devices to recognize stress and promote health can be a positive outcome, expanding the definition of digital well-being as opposed to dependency, non-use or reduction. Second, the transfer of socio-relational autonomy, according to Oshana, enables criteria for self-determined work in heteronomous employment relationships. Finally, the deployment and use of such EEG-based devices for stress detection can lead to coercion and manipulation, not only in interpersonal relationships, but also directly and more subtly through the technology itself, interfering with self-determined work.

Originality/value

Stress at work and EEG-based devices measuring stress have been discussed in numerous articles. This paper is one of the first to explore the ethical considerations of using these brain–computer interfaces from an employee perspective.

This book offers a comprehensive review of current topics in decision making. It covers new findings relating to foundations, mechanisms, and consequences of decisions, shedding light on the cognitive processes from different disciplinary perspectives. Chapters report on psychological studies of the cognitive mechanisms of decision making, neuroimaging studies on the neural correlates, studies of patient populations to characterize alterations in decision making in specific diseases, as well as discussions concerning philosophical and ethical issues.

In recent debates on digital twins, much attention has been paid to understanding the interaction between individuals and their digital representations (Braun 2021). Iglesias et al. (2025) shed new light on this debate, extending the reflection on digital doppelgängers, that is, digital twins that try to replicate the psychological dimension of an individual. They argue that such copies may serve as valuable means to achieve legacy and relational aims left unaddressed due to the person’s death. Against this background, we discuss how far we may better understand the implied normative aspects by considering them in terms of the represented person’s death. Specifically, we ask how we can and should, in normative terms, deal with a digital twin as a representation of a person after their death.

Here, we consider the decommissioning of such technology. We define decommissioning as the withdrawal, dismantling, or rendering the doppelgänger incapable of serving its original aims. We hypothesize that the way in which these digital doppelgängers ought to be decommissioned may depend upon whether they are viewed as a proxy or as an extension of personhood. By proxy, we mean a stand-in for an individual that replicates their decisions and style without embodying their personal identity or subjective experience; something that makes decisions on your behalf but is not you (Braun and Krutzinna 2022). What is left behind is akin to an artifact owned by you. An extension of personhood, by contrast, can mean extending aspects of an individual’s identity and relational presence beyond death by reflecting their values, projects, and relationships; something that is/was a part of yourself. What is left behind is akin to an “informational corpse” (Öhman and Floridi 2018).

Answering this decommissioning question is necessary not only to respect the intended aims of those for whom the digital doppelgängers were created, but also to potentially respect certain social norms surrounding obsequies. Viewing digital doppelgängers either as proxies or extensions of personhood implies respective normative notions. For instance, the pursuit of any decommissioning strategy will require necessary and sufficient standards of informed consent, which may be difficult to parse given that not all individuals will view their digital doppelgänger in the same manner. The decommissioning of digital doppelgängers is thus enriched by moral nuances influenced by the perceptions we may have of this technology.

Optogenetics holds potential for the treatment of retinitis pigmentosa and other rare degenerative retinal diseases. The technology allows cell activity to be controlled by combining genetic engineering with optical stimulation. The first clinical studies are already being conducted, in which the vision of participating patients who were blinded by retinitis pigmentosa was partially recovered. In view of the ongoing translational process, this paper examines regulatory aspects of preclinical and clinical research as well as of the therapeutic application of optogenetics in ophthalmology. There is no prohibition or specific regulation of optogenetic methods in the European Union. Regarding preclinical research, legal issues related to animal research and stem cell research are important. In clinical research and therapeutic applications, aspects of subjects' and patients' autonomy are relevant. Because no specific regulation so far exists at EU level for clinical studies in which a medicinal product and a medical device are evaluated simultaneously (combined studies), the requirements for clinical trials with medicinal products as well as those for clinical investigations of medical devices apply. This raises unresolved legal issues and is the case for optogenetic clinical studies when a viral vector classified as a gene therapy medicinal product (GTMP) for the gene transfer and a device qualified as a medical device for the light stimulation are tested simultaneously. Medicinal products for optogenetic therapies of retinitis pigmentosa fulfill the requirements for designation as orphan medicinal products, which comes with regulatory and financial incentives. However, equivalent regulation does not exist for medical devices for rare diseases.

Virtual reality (VR) induces a radical psychological reorientation. Yet descriptions of this reorientation are often steeped in theoretically misleading metaphors. We offer a more measured account, grounded in both philosophy and cognitive psychology, and use it to assess the claim that VR promotes moral learning by simulating another's perspective. This hypothesis depends on the assumption that avatar use produces experiences sufficiently similar to those of others to enable empathic growth. We reject that assumption and offer two arguments against it. First, empathy relevant to moral learning requires interpretive effort and contextual understanding, not just a shift in perspective. Second, VR's open-ended, user-driven structure tends to reinforce prior assumptions rather than unsettle them. Still, avatar use may have a different effect on moral learning, which we call self-fragmentation. By loosening the boundaries of the self, VR may expand the range of people one is disposed to empathize with.

When making substituted judgments for incapacitated patients, surrogates often struggle to guess what the patient would want if they had capacity. Surrogates may also agonize over having the (sole) responsibility of making such a determination. To address such concerns, a Patient Preference Predictor (PPP) has been proposed that would use an algorithm to infer the treatment preferences of individual patients from population-level data about the known preferences of people with similar demographic characteristics. However, critics have suggested that even if such a PPP were more accurate, on average, than human surrogates in identifying patient preferences, the proposed algorithm would nevertheless fail to respect the patient's (former) autonomy since it draws on the 'wrong' kind of data: namely, data that are not specific to the individual patient and which therefore may not reflect their actual values, or their reasons for having the preferences they do. Taking such criticisms on board, we here propose a new approach: the Personalized Patient Preference Predictor (P4). The P4 is based on recent advances in machine learning, which allow technologies including large language models to be more cheaply and efficiently 'fine-tuned' on person-specific data. The P4, unlike the PPP, would be able to infer an individual patient's preferences from material (e.g., prior treatment decisions) that is in fact specific to them. Thus, we argue, in addition to being potentially more accurate at the individual level than the previously proposed PPP, the predictions of a P4 would also more directly reflect each patient's own reasons and values. In this article, we review recent discoveries in artificial intelligence research that suggest a P4 is technically feasible, and argue that, if it is developed and appropriately deployed, it should assuage some of the main autonomy-based concerns of critics of the original PPP. We then consider various objections to our proposal and offer some tentative replies.

Background

Artificial intelligence (AI) has revolutionized various healthcare domains, where AI algorithms sometimes even outperform human specialists. However, the field of clinical ethics has remained largely untouched by AI advances. This study explores the attitudes of anesthesiologists and internists towards the use of AI-driven preference prediction tools to support ethical decision-making for incapacitated patients.

Methods

A questionnaire was developed and pretested among medical students. The questionnaire was distributed to 200 German anesthesiologists and 200 German internists, thereby focusing on physicians who often encounter patients lacking decision-making capacity. The questionnaire covered attitudes toward AI-driven preference prediction, availability and utilization of Clinical Ethics Support Services (CESS), and experiences with ethically challenging situations. Descriptive statistics and bivariate analyses were performed. Qualitative responses were analyzed using content analysis in a mixed inductive-deductive approach.

Results

Participants were predominantly male (69.3%), with ages ranging from 27 to 77. Most worked in nonacademic hospitals (82%). Physicians generally showed hesitance toward AI-driven preference prediction, citing concerns about the loss of individuality and humanity, lack of explicability in AI results, and doubts about AI’s ability to encompass the ethical deliberation process. In contrast, physicians had a more positive opinion of CESS. Availability of CESS varied, with 81.8% of participants reporting access. Among those without access, 91.8% expressed a desire for CESS.

Physicians' reluctance toward AI-driven preference prediction aligns with concerns about transparency, individuality, and human-machine interaction. While AI could enhance the accuracy of predictions and reduce surrogate burden, concerns about potential biases, de-humanisation, and lack of explicability persist.

Conclusions

German physicians who frequently encounter incapacitated patients exhibit hesitance toward AI-driven preference prediction but hold CESS in higher esteem. Addressing concerns about individuality, explicability, and human-machine roles may facilitate the acceptance of AI in clinical ethics. Further research into patient and surrogate perspectives is needed to ensure AI aligns with patient preferences and values in complex medical decisions.

A significant amount of European basic and clinical neuroscience research includes the use of transcranial magnetic stimulation (TMS) and low-intensity transcranial electrical stimulation (tES), mainly transcranial direct current stimulation (tDCS). Two recent changes in EU regulations, the introduction of the Medical Device Regulation (MDR) (2017/745) and of Annex XVI, have caused significant problems and confusion in the brain stimulation field. The negative consequences of the MDR for non-invasive brain stimulation (NIBS) have been largely overlooked and, to date, have not been consistently addressed by National Competent Authorities, local ethics committees, politicians, or the scientific communities. In addition, a rushed bureaucratic decision led to the seemingly wrong classification of NIBS products without an intended medical purpose into the same risk class III as invasive stimulators.

Overregulation is detrimental to research and to future developments. Researchers, clinicians, industry, patient representatives, and an ethicist were therefore invited to contribute to this document with the aim of starting a constructive dialogue and enacting positive changes in the regulatory environment.

This volume focuses on the ethical dimensions of the technological framework in which human thought and action are embedded and which has been brought into the focus of cognitive science by theories of situated cognition. There is a broad spectrum of technologies that co-actualize or enable and reinforce human cognition and action and that differ in the degree of physical integration, interactivity, adaptation processes, dependency and indispensability, etc. This technological framework of human cognition and action is evolving rapidly. Some changes are continuous, others are eruptive. Technologies that use machine learning, for example, could represent a qualitative leap in the technological framework of human cognition and action. The ethical consequences of applying theories of situated cognition to practical cases have not yet received adequate attention and are explored in this volume.

Non-invasive brain stimulation (NIBS) techniques such as transcranial direct current stimulation (tDCS) or transcranial magnetic stimulation (TMS) have made great progress in recent years and offer boundless potential for neuroscientific research and the treatment of disorders. However, the possible use of NIBS devices for neuro-doping and neuroenhancement in healthy individuals and the military is poorly regulated. The great potential and diverse applications can have an impact on the future development of the technology and society. This participatory study therefore aims to summarize the perspectives of different stakeholder groups with the help of qualitative workshops. Nine qualitative on-site and virtual workshops were conducted with 91 individuals from seven stakeholder groups: patients, students, do-it-yourself home users of tDCS, clinical practitioners, industry representatives, philosophers, and policy experts. The co-creative and design-based workshops were tailored to each group to document the wishes, fears, and general comments of the participants. The outlooks from each group were collected in written form and summarized into different categories. The result is a comprehensive overview of the different aspects that need to be considered in the field of NIBS. For example, several groups expressed the wish for home-based tDCS under medical supervision as a potential therapeutic intervention and discussed the associated technical specifications. Other topics included performance enhancement for certain professional groups, training requirements for practitioners, and questions of agency. This qualitative participatory research highlights the potential of tDCS and repetitive TMS as alternative therapies to medication, with fewer adverse effects and, in the case of tDCS, the option of home-based use. The ethical and societal impact of the abuse of NIBS for non-clinical use must be considered in policy-making and in the implementation of regulation. This study adds to the neuroethical debate on the responsible use and application of NIBS technologies, taking into consideration the different perspectives of important stakeholders in the field.

Machine learning (ML) has significantly enhanced the abilities of robots, enabling them to perform a wide range of tasks in human environments and adapt to our uncertain real world. Recent works in various ML domains have highlighted the importance of accounting for fairness to ensure that these algorithms do not reproduce human biases and consequently lead to discriminatory outcomes. With robot learning systems performing more and more tasks in our everyday lives, it is crucial to understand the influence of such biases to prevent unintended behavior toward certain groups of people. In this work, we present the first survey on fairness in robot learning from an interdisciplinary perspective spanning technical, ethical, and legal challenges. We propose a taxonomy for sources of bias and the types of discrimination that result from them. Using examples from different robot learning domains, we examine scenarios of unfair outcomes and strategies to mitigate them. We present early advances in the field by covering different fairness definitions, ethical and legal considerations, and methods for fair robot learning. With this work, we aim to pave the road for groundbreaking developments in fair robot learning.

Numerous complex and multi-faceted ethical questions arise from the innovation of neurotechnologies. Addressing these issues effectively requires the involvement of a diverse range of stakeholders, including patients, treatment providers, home users, scientists and engineers from different disciplines, and industry representatives. Different groups, however, possess varying levels of knowledge and experience regarding the ethical use and innovation of neurotechnologies. Therefore, customized methods are needed to identify their perspectives and ethical concerns. This article aims to introduce practical methods for eliciting ethical questions in the field of neurotechnology, including user journeys, persona approaches, material thinking, scenario building, fictional media contributions, and categorization.

With regard to the dual-use problem, digitalisation in the life sciences has two distinct influences, namely an exacerbating and an expanding one. By enabling faster and more extensive research and development processes, digitalisation exacerbates the existing dual-use problem because it also increases the speed at which the results of this research can be used for security-relevant purposes. In addition, the digitalisation of the life sciences extends the dual-use problem, as some of the digital tools that are developed and used in the life sciences can themselves be used for military or security-related purposes.

Broad-based governance is therefore required, including broad stakeholder participation in the research process and the provision of information on dual use in education in good scientific practice across institutions, career stages and disciplines.

In this letter to the editor, Antal and Baeken respond to a commentary by Rethwilm et al. on their position paper regarding the impact of the new EU Medical Device Regulation (MDR) on non-invasive brain stimulation (NIBS) research. While the authors welcome the commentators’ general agreement, they highlight ongoing challenges due to inconsistent interpretations of the MDR across countries and ethics committees. A major issue is that local ethics boards often lack legal or technical expertise, leading to bureaucratic and overly cautious decisions. Antal and Baeken call for clearer, binding guidelines and standardized procedures to harmonize research practices and reduce unnecessary barriers. The letter emphasizes the urgent need to clarify regulatory ambiguities to safeguard the future of NIBS research in Europe.

Phenomenological interview methods (PIMs) have become important tools for investigating subjective, first-person accounts of the novel experiences of people using neurotechnologies. Through the deep exploration of personal experience, PIMs help reveal both the structures shared between and notable differences across experiences. However, phenomenological methods vary on what aspects of experience they aim to capture and what they may overlook. Much discussion of phenomenological methods has remained within the philosophical and broader bioethical literature. Here, we begin with a conceptual primer and preliminary guide for using phenomenological methods to investigate the experiences of neural device users.

To improve and expand the methodology of phenomenological interviewing, especially in the context of the experience of neural device users, we first briefly survey three different PIMs, to demonstrate their features and shortcomings. Then we argue for a critical phenomenology—rejecting the ‘neutral’ phenomenological subject—that encompasses temporal and ecological aspects of the subjects involved, including interviewee and interviewer (e.g. age, gender, social situation, bodily constitution, language skills, potential cognitive disease-related impairments, traumatic memories) as well as their relationality to ensure embedded and situated interviewing. In our view, PIMs need to be based on a conception of experience that includes and emphasizes the relational and situated, as well as the anthropological, political and normative dimensions of embodied cognition.

We draw from critical phenomenology and trauma-informed qualitative work to argue for an ethically sensitive interviewing process from an applied phenomenological perspective. Drawing on these approaches to refine PIMs, researchers will be able to proceed more sensitively in exploring the interviewee’s relationship with their neuroprosthetic and will consider the relationship between interviewer and interviewee on both interpersonal and social levels.

As part of the ERANET NEURON initiative, this study conducted participatory workshops with seven stakeholder groups—including patients, clinicians, companies, home users, policy experts, and philosophers—to capture their perspectives, wishes, and concerns regarding non-invasive brain stimulation (NIBS). The qualitative findings were evaluated by an interdisciplinary expert panel and translated into recommendations for policymakers, health authorities, researchers, and industry.

The study reveals that access to NIBS in Europe is limited by unequal reimbursement policies and regulatory barriers, particularly the classification of NIBS devices as high-risk (Class III). The authors advocate for broader approval of tDCS for home use, clear standards for neurodata privacy, and EU-wide harmonization of certification and research funding. Emphasis is placed on the importance of involving all relevant stakeholders in decision-making processes to address ethical, legal, and practical challenges responsibly. The results highlight the potential of NIBS for medicine and society while underscoring the urgent need for structural adaptations.

This paper explores the potential of community-led participatory research for value orientation in the development of medical AI systems. First, conceptual aspects of participation and sharing, the current paradigms of AI development in medicine and the new challenges of AI technologies are examined. The paper proposes a shift towards a more participatory and community-led approach to AI development, illustrated by various participatory research methods in the social sciences and design thinking. It discusses the prevailing paradigms of ethics by design and embedded ethics in value customization of medical technologies and acknowledges their limitations and criticisms. It then presents a model for community-led development of AI in medicine that emphasizes the importance of involving communities at all stages of the research process. The paper argues for a shift towards a more participatory and community-led approach to AI development in medicine, which promises more effective and ethical medical AI systems.

Recent work on ecological accounts of moral responsibility and agency has argued for the importance of social environments for moral reasons responsiveness. Moral audiences can scaffold individual agents’ sensitivity to moral reasons and their motivation to act on them, but they can also undermine it. In this paper, we look at two case studies of ‘scaffolding bad’, where moral agency is undermined by social environments: street gangs and online incel communities. In discussing these case studies, we draw both on recent situated cognition literature and on scaffolded responsibility theory. We show that the way individuals are embedded into a specific social environment changes the moral considerations they are sensitive to in systematic ways because of the way these environments scaffold affective and cognitive processes, specifically those that concern the perception and treatment of ingroups and outgroups. We argue that gangs undermine reasons responsiveness to a greater extent than incel communities because gang members are more thoroughly immersed in the gang environment.

The concept of autonomy is indispensable in the history of Western thought. At least that’s how it seems to us nowadays. However, the notion has not always had the outstanding significance that we ascribe to it today and its exact meaning has also changed considerably over time. In this paper, we want to shed light on different understandings of autonomy and clearly distinguish them from each other. Our main aim is to contribute to conceptual clarity in (interdisciplinary) discourses and to point out possible pitfalls of conceptual pluralism.

Trustworthy medical AI requires transparency about the development and testing of underlying algorithms to identify biases and communicate potential risks of harm. Abundant guidance exists on how to achieve transparency for medical AI products, but it is unclear whether publicly available information adequately informs about their risks. To assess this, we retrieved public documentation on the 14 available CE-certified AI-based radiology products of the IIb risk category in the EU from vendor websites, scientific publications, and the European EUDAMED database. Using a self-designed survey, we reported on their development, validation, ethical considerations, and deployment caveats, according to trustworthy AI guidelines. We scored each question with either 0, 0.5, or 1, to rate if the required information was “unavailable”, “partially available,” or “fully available.” The transparency of each product was calculated relative to all 55 questions. Transparency scores ranged from 6.4% to 60.9%, with a median of 29.1%. Major transparency gaps included missing documentation on training data, ethical considerations, and limitations for deployment. Ethical aspects like consent, safety monitoring, and GDPR compliance were rarely documented. Furthermore, deployment caveats for different demographics and medical settings were scarce. In conclusion, public documentation of authorized medical AI products in Europe lacks sufficient public transparency to inform about safety and risks. We call on lawmakers and regulators to establish legally mandated requirements for public and substantive transparency to fulfill the promise of trustworthy AI for health.
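
The scoring scheme described in this abstract can be illustrated with a short Python sketch. It assumes that a product's transparency score is simply the sum of its per-question ratings divided by the 55 questions, expressed as a percentage; the abstract does not spell out the formula, so this is one plausible reading. The function name `transparency_score` and all example ratings are hypothetical and are not the study's data.

```python
from statistics import median

# Per-question ratings named in the abstract.
UNAVAILABLE, PARTIAL, FULL = 0.0, 0.5, 1.0
N_QUESTIONS = 55  # number of survey questions reported in the abstract


def transparency_score(ratings):
    """Return the share of achievable points for one product, in percent.

    Assumption: score = sum of ratings / number of questions.
    """
    if len(ratings) != N_QUESTIONS:
        raise ValueError(f"expected {N_QUESTIONS} ratings, got {len(ratings)}")
    if any(r not in (UNAVAILABLE, PARTIAL, FULL) for r in ratings):
        raise ValueError("each rating must be 0, 0.5, or 1")
    return 100.0 * sum(ratings) / N_QUESTIONS


# Hypothetical rating sheets, invented for illustration only.
product_a = [FULL] * 3 + [PARTIAL] * 1 + [UNAVAILABLE] * 51   # 3.5 points
product_b = [FULL] * 30 + [PARTIAL] * 7 + [UNAVAILABLE] * 18  # 33.5 points
product_c = [FULL] * 16 + [UNAVAILABLE] * 39                  # 16 points

scores = [transparency_score(p) for p in (product_a, product_b, product_c)]
print([round(s, 1) for s in scores])  # [6.4, 60.9, 29.1]
print(round(median(scores), 1))       # 29.1
```

Under this reading, point totals of 3.5, 33.5, and 16 out of 55 would correspond to the reported minimum, maximum, and median percentages, which suggests the assumed formula is at least consistent with the published figures.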

The debate regarding prediction and explainability in artificial intelligence (AI) centers around the trade-off between achieving high-performing, accurate models and the ability to understand and interpret the decision-making process of those models. In recent years, this debate has gained significant attention due to the increasing adoption of AI systems in various domains, including healthcare, finance, and criminal justice. While prediction and explainability are desirable goals in principle, the recent spread of highly accurate yet opaque machine learning (ML) algorithms has highlighted the trade-off between the two, marking this debate as an inter-disciplinary, inter-professional arena for negotiating expertise. There is no longer an agreement about what should be the “default” balance of prediction and explainability, with various positions reflecting claims for professional jurisdiction. Overall, there appears to be a growing schism between the regulatory and ethics-based call for explainability as a condition for trustworthy AI, and how it is being designed, assimilated, and negotiated. The impetus for writing this commentary comes from recent suggestions that explainability is overrated, including the argument that explainability is not guaranteed in human healthcare experts either. To shed light on this debate, its premises, and its recent twists, we provide an overview of key arguments representing different frames, focusing on AI in healthcare.

Position papers on artificial intelligence (AI) ethics are often framed as attempts to work out technical and regulatory strategies for attaining what is commonly called trustworthy AI. In such papers, the technical and regulatory strategies are frequently analyzed in detail, but the concept of trustworthy AI is not. As a result, it remains unclear. This paper lays out a variety of possible interpretations of the concept and concludes that none of them is appropriate. The central problem is that, by framing the ethics of AI in terms of trustworthiness, we reinforce unjustified anthropocentric assumptions that stand in the way of clear analysis. Furthermore, even if we insist on a purely epistemic interpretation of the concept, according to which trustworthiness just means measurable reliability, it turns out that the analysis will, nevertheless, suffer from a subtle form of anthropocentrism. The paper goes on to develop the concept of strange error, which serves both to sharpen the initial diagnosis of the inadequacy of trustworthy AI and to articulate the novel epistemological situation created by the use of AI. The paper concludes with a discussion of how strange error puts pressure on standard practices of assessing moral culpability, particularly in the context of medicine.

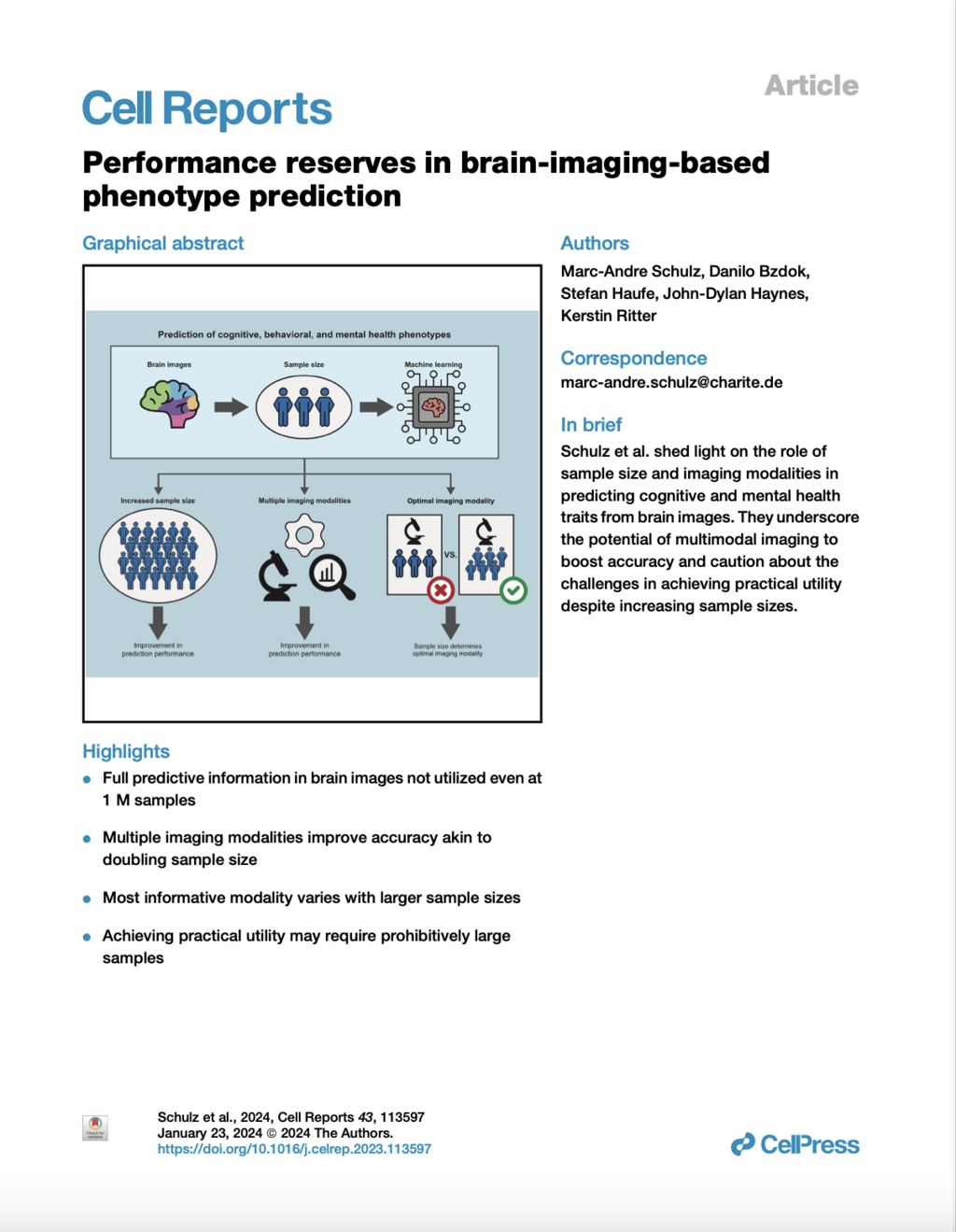

This study examines the impact of sample size on predicting cognitive and mental health phenotypes from brain imaging via machine learning. Our analysis shows a 3- to 9-fold improvement in prediction performance when sample size increases from 1,000 to 1 million participants. However, despite this increase, the data suggest that prediction accuracy remains worryingly low and far from fully exploiting the predictive potential of brain imaging data. Additionally, we find that integrating multiple imaging modalities boosts prediction accuracy, often by an amount equivalent to doubling the sample size. Interestingly, the most informative imaging modality often varied with increasing sample size, emphasizing the need to consider multiple modalities. Despite significant performance reserves for phenotype prediction, achieving substantial improvements may necessitate prohibitively large sample sizes, thus casting doubt on the practical or clinical utility of machine learning in some areas of neuroimaging.

Deep Learning (DL) has emerged as a powerful tool in neuroimaging research. DL models predicting brain pathologies, psychological behaviors, and cognitive traits from neuroimaging data have the potential to discover the neurobiological basis of these phenotypes. However, these models can be biased by spurious imaging artifacts or by the information about age and sex encoded in the neuroimaging data. In this study, we introduce a lightweight and easy-to-use framework called ‘DeepRepViz’ designed to detect such potential confounders in DL model predictions and enhance the transparency of predictive DL models. DeepRepViz comprises two components: an online visualization tool (available at https://deep-rep-viz.vercel.app/) and a metric called the ‘Con-score’. The tool enables researchers to visualize the final latent representation of their DL model and qualitatively inspect it for biases. The Con-score, or the ‘concept encoding’ score, quantifies the extent to which potential confounders like sex or age are encoded in the final latent representation and influence the model predictions. We illustrate the rationale of the Con-score formulation using a simulation experiment. Next, we demonstrate the utility of the DeepRepViz framework by applying it to three typical neuroimaging-based prediction tasks (n = 12,000). These include (a) distinguishing chronic alcohol users from controls, (b) classifying sex, and (c) predicting the speed of completing a cognitive task known as ‘trail making’. In the DL model predicting chronic alcohol users, DeepRepViz uncovers a strong influence of sex on the predictions (Con-score = 0.35). In the model predicting cognitive task performance, DeepRepViz reveals that age plays a major role (Con-score = 0.3). Thus, the DeepRepViz framework enables neuroimaging researchers to systematically examine their model and identify potential biases, thereby improving the transparency of predictive DL models in neuroimaging studies.

Various forms of brain stimulation are used to treat different neurological and psychiatric diseases, as well as for research purposes. In legal discourse, the focus has so far been predominantly on deep brain stimulation. In contrast, there is a lack of discussion on methods of non-invasive brain stimulation. Against the backdrop of the legal framework changed by Regulation (EU) 2017/745, this article first provides a classification under medical device law. It then explains issues of information and consent. Additionally, it presents the legal aspects of using AI in the context of non-invasive and deep brain stimulation. Finally, it provides an outlook on the methods of neural optogenetics that are still under development.

How does knowing another team member is cognitively impaired or enhanced affect people's motivation to contribute to the team's performance? Building on the Effects of Grouping on Impairments and Enhancements (GIE) framework, we conducted two between-subjects experiments (Ntotal = 2,352) with participants from a representative, nationwide sample of the working population in Germany. We found that another group member's impairment (sleep deprivation) and enhancement (taking enhancement drugs) lowered participants’ intentions to contribute to the team's performance. These effects were mediated by lowered perceived competence (enhancement and impairment) and warmth (only enhancement) of the other group member. The reason for being impaired or enhanced (altruistic vs. egoistic reason) moderated the indirect effect of the impairment on intended effort via warmth. Our results illustrate that people's work motivation is influenced by the psychophysiological states of other group members. Hence, the enhancement of one group member can have the paradoxical effect of impairing the performance of another.

In December 2022, the European Union (EU) unexpectedly reclassified repetitive transcranial magnetic stimulation (rTMS) and transcranial electrical stimulation (tES) as Class III medical devices—the highest risk category, typically reserved for invasive procedures such as deep brain stimulation. This decision is based on a flawed risk assessment that contradicts scientific evidence: Current studies and meta-analyses confirm that rTMS and tES are safe when used correctly, with only mild and rare adverse effects. The EU’s justification includes factually incorrect claims, such as NIBS causing “atypical brain development” or “abnormal brain activity,” which are unsupported by data.

The reclassification process lacked adequate consultation with experts or NIBS manufacturers and was finalized after an eight-week public hearing that received only 22 comments—most unrelated to NIBS. This decision has severe consequences: Research projects face delays, development costs rise, and European patients risk losing access to established non-pharmacological therapies. The European Society for Brain Stimulation (ESBS) warns that this move threatens Europe’s leading role in NIBS research and demands a reversal to Class IIa (moderate risk), which aligns with the actual safety profile of NIBS. A protest letter has already been submitted to the EU; further details and support are available on the ESBS website.

As brain-computer interfaces are promoted as assistive devices, some researchers worry that this promise to “restore” individuals worsens stigma toward disabled people and fosters unrealistic expectations. In three web-based survey experiments with vignettes, we tested how refusing a brain-computer interface in the context of disability affects cognitive (blame), emotional (anger), and behavioral (coercion) stigmatizing attitudes (Experiment 1, N = 222) and whether the effect of a refusal is affected by the level of brain-computer interface functioning (Experiment 2, N = 620) or the risk of malfunctioning (Experiment 3, N = 620). We found that refusing a brain-computer interface increased blame and anger, while brain-computer interface functioning did not change the effect of a refusal. Higher risks of device malfunctioning partially reduced stigmatizing attitudes and moderated the effect of refusal. This suggests that information about disabled people who refuse a technology can increase stigma toward them. This finding has serious implications for brain-computer interface regulation, media coverage, and the prevention of ableism.

The articles in this volume examine the extent to which current developments in the field of artificial intelligence (AI) are leading to new types of interaction processes and changing the relationship between humans and machines. First, new developments in AI-based technologies in various areas of application and development are presented. Subsequently, renowned experts discuss novel human-machine interactions from different disciplinary perspectives and question their social, ethical and epistemological implications. The volume sees itself as an interdisciplinary contribution to the sociopolitically pressing question of how current technological changes are altering human-machine relationships and what consequences this has for thinking about humans and technology.

Based on assumptions of the Job Demands-Resources model, we investigated employees’ willingness to use prescription drugs such as methylphenidate and modafinil for nonmedical purposes to enhance their cognitive functioning as a response to strain (i.e., perceived stress) that is induced by job demands (e.g., overtime, emotional demands, shift work, leadership responsibility). We also examined the direct and moderating effects of resources (e.g., emotional stability, social and instrumental social support) in this process. We utilized data from a representative survey of employees in Germany (N = 6454) encompassing various job demands and resources, levels of perceived stress, and willingness to use nonmedical drugs for performance enhancement purposes. By using Structural Equation Models, we found that job demands (such as overtime and emotional demands) and a scarcity of resources (such as emotional stability) increased strain, consequently directly and indirectly increasing the willingness to use prescription drugs for cognitive enhancement. Moreover, emotional stability reduced the effect of certain demands on strain. These results delivered new insights into mechanisms behind nonmedical prescription drug use that can be used to prevent such behaviour and potential negative health consequences.

Will neuroscientists soon be able to read minds thanks to brain imaging techniques? We cannot answer this question without knowing the state of the art in neuroimaging. But neither can we answer this question without having some understanding of the concept to which the term "mind reading" refers. This article is an attempt to develop such an understanding. Our analysis takes place in two stages. In the first stage, we provide a categorical explanation of mindreading. The categorical explanation formulates empirical conditions that must be met for mindreading to be possible. In the second stage, we develop a benchmark for assessing the performance of mindreading experiments. The resulting conceptualization of mindreading helps to reconcile folk psychological judgments about what mindreading must entail with the constraints imposed by empirical strategies for achieving it.

While the nonmedical use of prescription drugs to enhance cognitive performance (NMUPD-CE) has received increasing media attention and provoked ethical debates, the social drivers of this form of health-related drug misuse remain understudied. Therefore, this study examined how descriptive and injunctive norms as social influences affect decisions to engage in NMUPD-CE. We tested competing assumptions about whether moral acceptability and positive and negative outcome expectations mediate or moderate the social norm effects. We used data from a Germany-wide, web-based survey with a sample of adult nonusers who were recruited offline (N = 13,443). We found that 62.09% of the respondents indicated at least some willingness for NMUPD-CE. Positive associations occurred between this willingness and both social norms, high positive and low negative outcome expectations, as well as higher moral acceptability. Moral acceptability and positive outcome expectations partially mediated both social norm effects, while negative outcome expectations only partially mediated injunctive norms. Moreover, positive and negative outcome expectations also moderated both social norm effects. This study provides insights into the understanding of social influence in the context of substance misuse and beyond. It suggests that social norms operate via moral acceptability and outcome expectations, while outcome expectations also lead to differential effects of social norms.

Predicting brain age is a relatively new tool in neuromedicine and neuroscience. It is widely used in research and clinical practice as a marker for biological age, for the general health of the brain and as an indicator of various brain-related disorders. Its usefulness in all these tasks depends on detecting outliers and thus not correctly predicting chronological age. The indicative value of age prediction comes from the gap between the chronological age of a brain and the predicted age, the "Brain Age Gap" (BAG). This article shows how the clinical and scientific application of brain age prediction tacitly pathologizes the conditions it addresses. It is argued that the tacit nature of this transformation obscures the need for its explicit justification.
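
As a purely illustrative aid, the Brain Age Gap mentioned in this abstract can be written as a one-line computation. The sketch below assumes the common sign convention of predicted age minus chronological age, which the abstract itself does not specify; the `Scan` class and all ages are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Scan:
    """One hypothetical brain scan with a model-predicted age (in years)."""
    chronological_age: float
    predicted_age: float

    @property
    def brain_age_gap(self) -> float:
        # Assumed convention: positive values mean the brain "looks older"
        # than the person's chronological age.
        return self.predicted_age - self.chronological_age


# Invented example values, not data from any study.
scans = [Scan(60.0, 66.5), Scan(45.0, 44.0), Scan(70.0, 70.0)]
gaps = [s.brain_age_gap for s in scans]
print(gaps)  # [6.5, -1.0, 0.0]
```

The sketch makes the abstract's point concrete: the measure only carries information when the prediction deviates from chronological age, so a perfectly accurate age predictor would yield a gap of zero for everyone.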

Introduction: Moral judgment is of critical importance in the work context because of its implicit or explicit omnipresence in a wide range of work-place practices. The moral aspects of actual behaviors, intentions, and consequences represent areas of deep preoccupation, as exemplified in current corporate social responsibility programs, yet there remain ongoing debates on the best understanding of how such aspects of morality (behaviors, intentions, and consequences) interact. The ADC Model of moral judgment integrates the theoretical insights of three major moral theories (virtue ethics, deontology, and consequentialism) into a single model, which explains how moral judgment occurs in parallel evaluation processes of three different components: the character of a person (Agent-component); their actions (Deed-component); and the consequences brought about in the situation (Consequences-component). The model offers the possibility of overcoming difficulties encountered by single or dual-component theories.

Methods: We conducted a 2 × 2 × 2 between-subjects vignette experiment with a Germany-wide sample of employed respondents (N = 1,349) to test this model.
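To make the structure of such a 2 × 2 × 2 between-subjects design concrete, the following sketch enumerates the eight vignette conditions and assigns each respondent to exactly one of them at random. The factor names follow the three ADC components; the level labels ("positive", "negative"), the function names, and the assignment procedure are assumptions for illustration, not the study's actual materials or procedure.

```python
import itertools
import random

# Hypothetical factor levels; the abstract only states that three
# components were each varied across two levels.
FACTORS = {
    "agent": ("positive", "negative"),
    "deed": ("positive", "negative"),
    "consequences": ("positive", "negative"),
}


def all_conditions(factors):
    """Return every combination of factor levels as a list of dicts."""
    names = list(factors)
    return [
        dict(zip(names, combo))
        for combo in itertools.product(*factors.values())
    ]


def assign_conditions(respondent_ids, conditions, seed=0):
    """Randomly assign each respondent to one condition (between-subjects)."""
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    return {rid: rng.choice(conditions) for rid in respondent_ids}
```

Because each respondent sees only one vignette, differences between conditions can be compared across groups rather than within persons.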

Results: Results showed that the Deed-component affects willingness to cooperate in the work context, which is mediated via moral judgments. These effects also varied depending on the levels of the Agent- and Consequences-component.

Discussion: Thereby, the results exemplify the usefulness of the ADC Model in the work context by showing how the distinct components of morality affect moral judgment.

The rise of neurotechnologies, especially in combination with artificial intelligence (AI)-based methods for brain data analytics, has given rise to concerns around the protection of mental privacy, mental integrity and cognitive liberty – often framed as “neurorights” in ethical, legal, and policy discussions. Several states are now looking at incorporating neurorights into their constitutional legal frameworks, and international institutions and organizations, such as UNESCO and the Council of Europe, are taking an active interest in developing international policy and governance guidelines on this issue. However, in many discussions of neurorights the philosophical assumptions, ethical frames of reference and legal interpretation are either not made explicit or conflict with each other. The aim of this multidisciplinary work is to provide conceptual, ethical, and legal foundations for a common minimalist understanding of mental privacy, mental integrity, and cognitive liberty that can facilitate scholarly, legal, and policy discussions.

This study contributes to the emerging literature on public perceptions of neurotechnological devices (NTDs) in their medical and non-medical applications, depending on their invasiveness, framing effects, and interindividual differences related to personal needs and values. We conducted two web-based between-subject experiments (2 × 2 × 2) using a representative, nation-wide sample of the adult population in Germany. Using vignettes describing how two NTDs, brain stimulation devices (BSDs; Experiment 1, N = 1,090) and brain-computer interfaces (BCIs; Experiment 2, N = 1,089), function, we randomly varied the purpose (treatment vs. enhancement) and invasiveness (noninvasive vs. invasive) of the NTD, and assessed framing effects (variable order of assessing moral acceptability first vs. willingness to use first). We found a moderate moral acceptance and willingness to use BSDs and BCIs. Respondents preferred treatment over enhancement purposes and noninvasive over invasive devices. We also found a framing effect and explored the role of personal characteristics as indicators of personal needs and values (e.g., stress, religiosity, and gender). Our results suggest that the future demand for BSDs or BCIs may depend on the purpose, invasiveness, and personal needs and values. These insights can inform technology developers about the public’s needs and concerns, and enrich legal and ethical debates.

Researchers in applied ethics, and particularly in some areas of bioethics, aim to develop concrete and appropriate recommendations for action in morally relevant situations in the real world. However, in moving from more abstract levels of ethical reasoning to such concrete recommendations, it seems possible to develop divergent or even contradictory recommendations for action in a given situation, even in relation to the same normative principle or norm. This may give the impression that such recommendations are arbitrary and thus not well founded. Against this background, we first want to show that ethical recommendations for action, while contingent to some extent, are not arbitrary if they are developed in an appropriate way. To this end, we examine two types of contingencies that arise in applied ethical reasoning using recent examples of recommendations for action in the context of the COVID-19 pandemic. In doing so, we refer to a three-stage model of ethical reasoning for recommendations for action. However, this leaves open the question of how applied ethics can deal with contingent recommendations for action. Therefore, in a second step, we analyze the role of bridging principles for the development of ethically appropriate recommendations for action, i.e., principles that combine normative claims with relevant empirical information to justify certain recommendations for action in a given morally relevant situation. Finally, we discuss some implications for reasoning and reporting in empirically informed ethics.

This study examines how work stress affects the misuse of prescription drugs to augment mental performance without medical necessity (i.e., cognitive enhancement). Based on the effort–reward imbalance model, it can be assumed that a misalignment of effort exerted and rewards received increases prescription drug misuse, especially if employees overcommit. To test these assumptions, we conducted a prospective study using a nationwide web-based sample of the working population in Germany (N = 11,197). Effort, reward, and overcommitment were measured at t1, and the 12-month frequency of prescription drug misuse for enhancing cognitive performance was measured at a one-year follow-up (t2). The results show that 2.6% of the respondents engaged in such drug misuse, of whom 22.7% reported frequent misuse. While we found no overall association between misuse frequency and effort, reward, or their imbalance, overcommitment was significantly associated with a higher misuse frequency. Moreover, at low levels of overcommitment, more effort and an effort–reward imbalance discouraged future prescription drug misuse, while higher overcommitment, more effort, and an imbalance increased it. These findings suggest that a stressful work environment is a risk factor for health-endangering behavior, and thereby underline the importance of identifying groups at risk of misusing drugs.

This article critically addresses the conceptualization of trust in the ethical discussion on artificial intelligence (AI) in the specific context of social robots in care. First, we attempt to define in which respect we can speak of ‘social’ robots and how their ‘social affordances’ affect the human propensity to trust in human–robot interaction. Against this background, we examine the use of the concept of ‘trust’ and ‘trustworthiness’ with respect to the guidelines and recommendations of the High-Level Expert Group on AI of the European Union.

In the context of rapid digitalisation and the emergence of consumer health technologies, neurotechnological devices are associated with further hopes, but also with major ethical and legal concerns.

Machine learning (ML) is increasingly used to predict clinical deterioration in intensive care unit (ICU) patients through scoring systems. Although promising, such algorithms often overfit their training cohort and perform worse at new hospitals. Thus, external validation is a critical – but frequently overlooked – step to establish the reliability of predicted risk scores to translate them into clinical practice. We systematically reviewed how regularly external validation of ML-based risk scores is performed and how their performance changed in external data.

This article is a preprint and has not been peer-reviewed. It reports new medical research that has yet to be evaluated and so should not be used to guide clinical practice.

Artificial intelligence (AI) applications are finding their way into our everyday lives. In the field of medicine, this involves the optimisation of diagnosis and therapy, the use of health apps of all kinds, the provision of personalised medicine tools, the possibilities of robot-assisted surgery, optimised hospital data management and the development and provision of intelligent medical products. But are these constellations actually examples of artificial intelligence? What does ‘intelligence’ mean and what conditions must be met in order to be able to speak of ‘intelligence’? These questions are answered very differently depending on the context and disciplinary anchoring, resulting in a wide-ranging and multi-faceted discourse. What is undisputed, however, is that the quality and quantity of the applications categorised as AI raise numerous legal, ethical, social and scientific questions that have yet to be comprehensively addressed and satisfactorily resolved. While the law usually lags behind practical developments when it comes to regulating technology, a paradigm shift is taking place in the field of AI: at the end of April 2021, the European Commission presented a draft ‘Regulation laying down harmonised rules on artificial intelligence (Artificial Intelligence Act) and amending certain Union acts’ (hereinafter referred to as the ‘VO-E’). The following explanations present the regulatory approach on which this draft is based as well as the resulting central definitions, duties and mechanisms. In the interest of a holistic approach, ethical, medical-scientific and social considerations are also taken into account.

Probably nowhere are technology and people so closely and intimately intertwined as in the fields of medicine, therapy and care. Numerous ethical questions are therefore raised at the nexus of medicine and technology. This volume pursues a dual aim: on the one hand, illuminating the entanglements of medicine, technology and ethics from different disciplinary perspectives and, on the other, taking a look at practice, at the realms of experience of people working in medicine and their interactions with technologies.

Introduction

Although machine learning classifiers have been frequently used to detect Alzheimer’s disease (AD) based on structural brain MRI data, potential bias with respect to sex and age has not yet been addressed. Here, we examine a state-of-the-art AD classifier for potential sex and age bias even in the case of balanced training data.

Methods

Based on an age- and sex-balanced cohort of 432 subjects (306 healthy controls, 126 subjects with AD) extracted from the ADNI database, we trained a convolutional neural network to detect AD in MRI brain scans and performed ten different random training-validation-test splits to increase the robustness of the results. Classifier decisions for single subjects were explained using layer-wise relevance propagation.

Results

The classifier performed significantly better for women than for men in terms of balanced accuracy. No significant differences were found in clinical AD scores, ruling out a disparity in disease severity as a cause for the performance difference. Analysis of the explanations revealed a larger variance in regional brain areas for male subjects compared to female subjects.
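The kind of subgroup comparison reported here can be sketched as follows. Balanced accuracy is the mean of sensitivity and specificity; the function names, the binary label coding (1 = AD, 0 = healthy control), and the grouping procedure are assumptions for illustration and not the authors' evaluation code.

```python
from collections import defaultdict


def balanced_accuracy(y_true, y_pred):
    """Mean of sensitivity and specificity for binary labels (1 = AD, 0 = control)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("Both classes must be present to compute balanced accuracy.")
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return (sensitivity + specificity) / 2


def balanced_accuracy_by_group(y_true, y_pred, groups):
    """Compute balanced accuracy separately for each subgroup label."""
    by_group = defaultdict(lambda: ([], []))
    for t, p, g in zip(y_true, y_pred, groups):
        by_group[g][0].append(t)
        by_group[g][1].append(p)
    return {g: balanced_accuracy(ts, ps) for g, (ts, ps) in by_group.items()}
```

Reporting the metric per subgroup, rather than only pooled over all subjects, is what makes a performance difference between women and men visible in the first place.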

Discussion

The identified sex differences cannot be attributed to an imbalanced training dataset and therefore point to the importance of examining and reporting classifier performance across population subgroups to increase transparency and algorithmic fairness. Collecting more data especially among underrepresented subgroups and balancing the dataset are important but do not always guarantee a fair outcome.

Background:

Resources are increasingly spent on artificial intelligence (AI) solutions for medical applications aiming to improve diagnosis, treatment, and prevention of diseases. While the need for transparency and reduction of bias in data and algorithm development has been addressed in past studies, little is known about the knowledge and perception of bias among AI developers.

Objective:

This study’s objective was to survey AI specialists in health care to investigate developers’ perceptions of bias in AI algorithms for health care applications and their awareness and use of preventative measures.

Background: Accurate prediction of clinical outcomes in individual patients following acute stroke is vital for healthcare providers to optimize treatment strategies and plan further patient care. Here, we use advanced machine learning (ML) techniques to systematically compare the prediction of functional recovery, cognitive function, depression, and mortality of first-ever ischemic stroke patients and to identify the leading prognostic factors.

The use of artificial intelligence (AI), i.e. algorithmic systems that can solve complex problems independently, will foreseeably play an important role in clinical and nursing practice. In clinical practice, AI systems are already in use to support diagnostic and therapeutic decisions or to make prognoses. For several years now, systems for diagnostic support in radiology have been tested and discussed (Wu et al. 2020; McKinney et al. 2020). This is accompanied by the hope of faster, more efficient and more effective diagnoses and decisions as well as new therapy and prognosis options, especially in big data-based medicine (i.e. in medical areas that rely on the processing of large amounts of complex and unstructured data). In nursing practice, AI plays a role primarily in connection with the use of nursing robots such as Paro or Pepper, which are intended to independently animate people in need of care, communicate with them or take over certain nursing tasks (Bendel 2018).